Parea AI Review

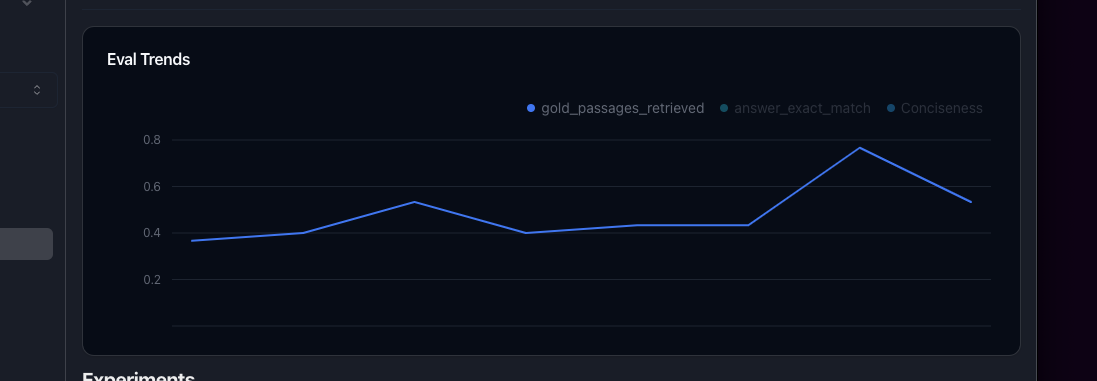

LLM experimentation and human annotation platform for AI teams to evaluate, test, and observe LLM apps before and after production.

Verdict

Parea AI provides a unified workspace for LLM teams covering experiment tracking, auto-generated domain-specific evaluations, observability, and human annotation workflows. It targets the growing need for rigorous pre-production testing of LLM applications, competing with tools like LangSmith and Weights & Biases Prompts. A free tier makes it accessible to individual developers, while its annotation and eval automation features give enterprise teams a structured path to confident production deployment.

What it does

Test and evaluate your AI
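
For readers new to the category, here is a minimal sketch of what "evaluating" an LLM app over a dataset generally involves. It uses plain Python and does not use Parea's SDK. The names `toy_app`, `exact_match`, and `run_experiment` are hypothetical, and the toy app stands in for a real model call.

```python
from statistics import mean
from typing import Callable

# Hypothetical stand-in for an LLM app; a real app would call a model here.
def toy_app(question: str) -> str:
    answers = {"capital of France?": "Paris", "2 + 2?": "4"}
    return answers.get(question, "I don't know")

def exact_match(output: str, target: str) -> float:
    """Score 1.0 when output equals target (ignoring case and padding), else 0.0."""
    return 1.0 if output.strip().lower() == target.strip().lower() else 0.0

def run_experiment(
    app: Callable[[str], str],
    dataset: list[dict],
    evaluator: Callable[[str, str], float],
) -> dict:
    """Run the app on every sample, score each output, and aggregate the scores."""
    rows = []
    for sample in dataset:
        output = app(sample["input"])
        score = evaluator(output, sample["target"])
        rows.append({**sample, "output": output, "score": score})
    return {"mean_score": mean(r["score"] for r in rows), "rows": rows}

dataset = [
    {"input": "capital of France?", "target": "Paris"},
    {"input": "2 + 2?", "target": "4"},
    {"input": "largest planet?", "target": "Jupiter"},
]

result = run_experiment(toy_app, dataset, exact_match)
print(f"mean score: {result['mean_score']:.2f}")  # prints "mean score: 0.67"
```

The per-row scores are what a human annotator would typically review or correct. Platforms in this space differ mainly in how they store, compare, and visualize such runs.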

Best for

Parea AI is best for teams and enterprises looking to confidently ship LLM apps to production.

Pros & cons

Pros:

- Auto-creates domain-specific LLM evaluations

- Combines experiment tracking, observability, and human annotation

- Free tier available for solo developers

Cons:

- Crowded space with established competitors (LangSmith, W&B)

- Enterprise feature depth may be overkill for small projects

- Relatively early-stage brand recognition

Frequently asked

- Is Parea AI free to use?

  Yes. Parea AI has a free tier; paid plans are available for teams (see the site for exact pricing).

- Does Parea AI have memory?

  No. It has no persistent memory; sessions don't carry over by default.

- Can Parea AI do voice or images?

  Voice: no. Image generation: no.

- What are the best alternatives to Parea AI?

  LangSmith and Weights & Biases Prompts are the closest competitors. Browse the AI Tools Directory for other related tools.
